My cloud, my way

I have just finished setting up my personal cloud storage. It was a long and difficult task, and I'd like to share with people who have similar requirements some useful information and pointers that would have saved me a lot of time.

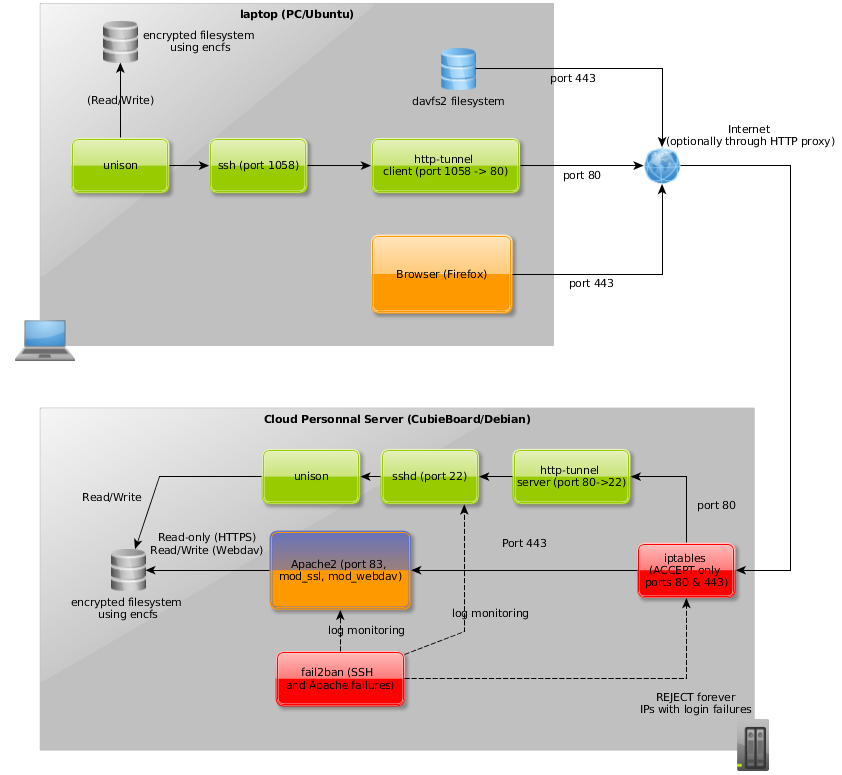

Summary diagram

Orange: HTTPS stream; Green: synchronization stream; Blue: Webdav stream; Red: security system

My requirements

- Safe : strongly encrypted storage for data and backups, encrypted communications, easy to back up and restore. Client-side encryption is optional.

- Ecological : reduced footprint, especially regarding energy consumption.

- Cheap : free or very low price for a large amount of storage space (200 GB to 1 TB).

- Open : should run under the three main operating systems (Linux, Windows, OS X); HTTP proxy compliant; available from anywhere using a simple web browser.

- Fast : I mean less than 10 minutes to detect changes among my 110 GB / 90K files. Low CPU consumption on both the client side and the server side is appreciated.

Kinds of files in the cloud storage

The file usage patterns I have identified for myself so far are :

- "Exchange" : temporary storage to easily share files between computers. Synchronous writing. I typically use this when leaving the office, to upload a document I want to work on at home from another computer; I want to be sure that the file is uploaded to the cloud immediately, without having to wait for the next synchronization. Note that this would be largely unnecessary if I kept my computers online, but I suspend them to save energy.

- "Pure cloud" : the primary source is the cloud. Files can be read or written from any node, but the preferred node in case of conflict is the cloud itself. I use it for a few TODO notes that should be available from anywhere. The synchronization can be asynchronous.

- "Archive" : same as "Pure cloud" but for archiving purposes only: few writes, few reads, files to be kept. I use this to store some backups.

- "Unidirectional copy" : asynchronous copy of a directory to another node, for read-only use when offline. I use this to get a copy of some directories that live only on the cloud but are sometimes needed offline (for instance, I want a read-only snapshot of my personal notes, uploaded from my personal laptop, on my office laptop).

- "Unidirectional sync" : a directory is primary on a node (this node is preferred in case of conflict) and is asynchronously synchronized into the cloud and then possibly other nodes. The directory can be written only on the primary node. This is the main pattern I use for most of my data.

- "Bidirectional sync" : a directory shared between several nodes. Any node can read or write. I don't use this mode because, in my experience, it comes at the cost of numerous conflicts : if you have to edit files from an offline computer (on the train for instance), you quickly get conflicts, and by the time you notice the problem it is often too late to reconcile them properly. I prefer to use the "Pure cloud" pattern for files that can be written by several nodes. In the "Pure cloud" pattern, however, these files should be treated as read-only when offline, because offline changes will be overwritten by the cloud version at the next synchronization.
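
One way to summarize the conflict rules behind these patterns is a small lookup table. The sketch below is illustrative only: the `Pattern` class, its field names and the `needs_manual_merge` helper are invented for this example and do not correspond to the configuration of any tool. "Exchange" is left out because no conflict rule is stated for it, and the entry for "Unidirectional copy" is one reading of the description above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Pattern:
    """One file usage pattern (names are illustrative, not tool settings)."""
    writers: str             # who is allowed to modify the files
    winner: Optional[str]    # side preferred on conflict, None if undefined


PATTERNS = {
    "pure cloud": Pattern(writers="any node", winner="cloud"),
    "archive": Pattern(writers="any node", winner="cloud"),
    "unidirectional copy": Pattern(writers="source only", winner="source"),
    "unidirectional sync": Pattern(writers="primary node only", winner="primary node"),
    "bidirectional sync": Pattern(writers="any node", winner=None),
}


def needs_manual_merge(name: str) -> bool:
    """A pattern without a preferred side leaves conflicts to the user."""
    return PATTERNS[name].winner is None
```

Only "bidirectional sync" has no preferred side, which is why it is the only pattern that ends in manual conflict resolution.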

The different streams of the infrastructure

HTTPS using a browser

- Typical use case : I'm traveling and I want to view or show a picture, an administrative document, etc.

- Usage frequency : low

- From where ? anywhere on the planet

- Requirements : a browser and a login/password

- Modalities : read-only, the files are browsed using the default Apache tree explorer.

- My experience : the navigation is so fast (even with my CubieBoard and my rather low upload bandwidth) that I use it to look up documents even from home.

Remote filesystem mount point

- Typical use cases :

  - Copying some files to back them up, or making sure a file is uploaded to the cloud without waiting for the next scheduled sync (when leaving the office for instance)

  - Performing filesystem operations against the mount point (count files, check size recursively, remove directories...)

  - Editing a note file located on the cloud.

- Usage frequency : mounted at startup, pretty low effective usage (once or twice a day)

- From where ? office, home

- Requirements : mounting software (I use davfs2)

- Modalities : works well even through an HTTP proxy. It works using a cache by design, so the local and the remote files may differ for a period of time. Never use this for synchronization (using rsync or unison for instance) because it doesn't preserve timestamps, see "Note about Webdav" below.

- My experience : OK if you only use it for the occasional use cases described above. It comes with a significant latency that slows down commands such as 'df'. I plan to mount it only on demand and to stop mounting it automatically at startup.

Local access to synchronized files

- Typical use cases : doing real work (like development) at home or at the office that can't afford high latencies when saving files.

- From where ? home, office.

- Usage frequency : always on in background.

- Modalities : sync every 1h30; a full sync of the entire collection takes one to two minutes. Only the cloud contains all the data : on my office computer, I only store professional project files and I only synchronize those to the cloud; same for my home computer with the personal stuff.

- My experience : works well, but the merge/conflict priorities must be clear and built into the sync commands. Never use bidirectional sync (see "Kinds of files in the cloud storage"), which can turn bad because of conflicts.

The solutions I tried during the last year

- SparkleShare : based on Git. As the website now states, it is good when only a small amount of storage is required (very good for that purpose), but Git is not designed for large binary storage, so SparkleShare quickly becomes too slow to remain usable.

- Wuala : very good and clever, many features, client-side encryption but : 1) not open source, so we have to trust that their client-side encryption code contains no backdoor (difficult to believe nowadays ;-) ) 2) expensive.

- Owncloud : pretty good, I now consider release 5 a serious solution. It meets all my criteria BUT is soooooo slow (on my CubieBoard, 1 GHz ARM, SATA3 adapter)... Even when using a finely tuned MySQL database (asynchronous IO among other things) instead of the packaged SQLite, it becomes very slow after a few tens of thousands of files, mainly because of the high number of SQL queries it has to perform (not only when using the Web GUI but also when using the Webdav interface). The synchronization client 1.4 (for Windows 7 and Ubuntu) is very slow (it takes more than one hour to detect changes, or fails with a timeout most of the time) and uses a significant amount of CPU (10 or 20%) even on powerful computers (i7, 4 cores). After extensive use of Owncloud over several months I had to try something else, too bad... I may give it another try in a few years.

- Hand-crafted solution : I finally decided to solve the problem the Unix way, i.e. many small and powerful specialized tools chained together, and it finally works even better than initially expected. See details below.

Not tested, but not that far from my requirements

- Client-side encryption with EncFS + Dropbox/Hubic/Google Drive or other free storage services. The main problems are 1) the cost of the storage : free plans provide only a few GB 2) the web GUIs are unusable because all directory and file names are encrypted. You'll find a lot of tutorials and blogs about this solution on the Web.

- Seafile : not tested because it is not compatible with HTTP proxies; looks promising on paper.

Features I don't care about (but you may)

- Directory/file sharing and groupware features like concurrent editing : most modern tools like Owncloud support this.

- Version control (Owncloud is bundled with a plugin for that purpose). I still use an SCM (Git) for some directories (like source code or text notes) on the original source directory (and sometimes on the replicated locations), but I exclude the .git directories (which contain the local repository) from synchronization, so the source and the destination each have their own local repository and these don't collide (a local Git repository is not intended to be shared among several computers).

Note about Webdav

- Webdav is an old technology that is re-emerging thanks to the cloud storage trend; most cloud providers offer Webdav connectivity.

- The good :

  - It is based on HTTP, so it is HTTP-proxy compliant out of the box.

  - A remote Webdav service can be mounted under Linux (using davfs2) or other operating systems.

- The bad : however, my conclusion is that this technology is not reliable enough to build a cloud meeting my requirements :

  - Timestamps and permissions are not preserved on copy.

  - Mainly because of the previous limitation, synchronization over a Webdav mount (using rsync or unison for instance) is not reliable and can even be dangerous.

  - I sometimes observed (using davfs2) that some files existing on the server side were not visible from the client (even with a regular name).

  - Webdav requires a cache on the client and comes with write latencies, often of several seconds or tens of seconds.

  - Installation is often cumbersome, especially under Windows XP/Vista/7, each of which comes with different bugs that require changing the Windows registry (I never managed to make it work under Windows 7).

  - Webdav has a bad reputation when it comes to security, but "secure" Webdav, i.e. Webdav + Basic/Digest authentication over HTTPS, looks sufficient (I'm not a security expert though).
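
Why lost timestamps matter for synchronization can be shown with a small experiment. The sketch below does not touch Webdav at all: it assumes a simplified change detector that compares only size and modification time (the helper `looks_changed` is hypothetical; rsync and Unison use more elaborate checks), and it uses a plain `shutil.copyfile` as a stand-in for a copy that drops timestamps.

```python
import os
import shutil
import tempfile


def looks_changed(src: str, dst: str) -> bool:
    """Simplified quick check: size or modification time (whole seconds) differ."""
    a, b = os.stat(src), os.stat(dst)
    return a.st_size != b.st_size or int(a.st_mtime) != int(b.st_mtime)


with tempfile.TemporaryDirectory() as tmp:
    src = os.path.join(tmp, "note.txt")
    with open(src, "w") as f:
        f.write("same content on both sides\n")
    # Pretend the file was last edited a day ago.
    day_ago = os.stat(src).st_mtime - 86400
    os.utime(src, (day_ago, day_ago))

    lossy = os.path.join(tmp, "lossy_copy.txt")
    shutil.copyfile(src, lossy)      # content only, the copy is dated "now"

    faithful = os.path.join(tmp, "faithful_copy.txt")
    shutil.copy2(src, faithful)      # content plus timestamps

    lossy_flagged = looks_changed(src, lossy)
    faithful_flagged = looks_changed(src, faithful)
    print(lossy_flagged, faithful_flagged)
```

The copy that lost its timestamp is reported as changed although its content is identical, so a tool relying on such a check would see spurious modifications on every run.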


Note about the hardware, a CubieBoard 1

- Excellent lightweight device : a bit more expensive than a Raspberry Pi but more powerful (1 GHz ARM CPU), with more memory (512 MB) and a SATA3 adapter that avoids going through a slower USB connector.

- My hdparm stats :

      Timing cached reads: 796 MB in 2.00 seconds = 398.06 MB/sec
      Timing buffered disk reads: 326 MB in 3.00 seconds = 108.52 MB/sec

- Note that a CubieBoard 2 has recently been made available; the main evolution is a dual-core ARM CPU. It looks good, but my CubieBoard 1 is still enough for me alone.

- The measured power consumption, including the transformer, goes from 3W (100% idle) to 6W (100% CPU + extensive IO usage)

- The (excellent) tutorial I followed to install Debian is here

- The bad :

  - I had a lot of IO failures because the 2.5" hard disk was not getting enough power. I finally found a solution : in addition to the regular 5V/0.5A power jack cable, I had to plug another USB cable into the female mini USB port. With this dual power supply, the SATA connector works like a charm.

  - The CPU is enough for single-person remote access (Apache, on-the-fly encryption, unison...) but not enough to compress tens of GB of data when doing backups. I have to back up using plain tar; even gzip is far too slow and would take days (~1MB/sec). It's still OK because I have a lot of free disk space.

  - I regularly back up the system (about 1GB) to a microSD card stored in a safe place far from the server.

Note about EncFS

EncFS is a filesystem encryption program. It maps a "real" filesystem containing encrypted files to a userspace 'in memory' filesystem. It is very simple to use and stores the files encrypted file by file; even the directory and file names are encrypted. The encryption is very strong when using the paranoia mode ("Cipher: AES Key Size: 256 bits PBKDF2 with 3 second runtime, 160 bit salt" according to the man page).

- If an attacker or a burglar physically steals the server, he has to unplug it and therefore shut it down. Without the password, the data stays safely encrypted on the hard disk and is useless to the attacker.

- Note that EncFS doesn't actually use your password to encrypt the files : it uses a randomly generated internal key, itself encrypted using your password. This is cool because you can change the filesystem password (EncFS provides an admin command for that) without any file having to be encrypted again.

- Another cool thing with EncFS is the fact that even root can't access the filesystem; only the user that mounted the filesystem into its userspace (www-data when used in an Apache context) is able to.

- A last cool thing is that all the files are already encrypted for backup : one doesn't have to encrypt the files during the backup process (fortunately, because given the size of the data and my server CPU, it would simply be impossible in my case). The backup files can be stored on a regular filesystem since the data is already encrypted. Moreover, the per-file EncFS encryption mechanism allows incremental backups (mandatory as well in my case).

- I also use EncFS to store my local files on my laptop, so the data is never available in clear throughout the process (encrypted on my laptop, encrypted during the transfer using strong SSL encryption and finally encrypted on the server side)

- The CPU overhead is minor. The EncFS process uses some 60-80% CPU on the (fanless) server for short periods when accessing files, but I still see a lot of IO wait, so disk access is actually the bigger bottleneck.

- The only (minor) drawback is the fact that one has to provide a password to mount the filesystem (done only once when booting the server).
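
The key indirection described above (a random internal key protected by the password) can be illustrated with a toy model. This is not EncFS's actual code or file format: the function names are invented, the iteration count is arbitrary, and the XOR "wrapping" is deliberately simplistic and unsuitable for real use. Only `hashlib.pbkdf2_hmac` and `secrets.token_bytes` are real standard-library calls.

```python
import hashlib
import secrets


def derive_kek(password: str, salt: bytes) -> bytes:
    """Stretch the password into a 32-byte key-encryption key with PBKDF2."""
    return hashlib.pbkdf2_hmac("sha256", password.encode(), salt, 100_000)


def xor(a: bytes, b: bytes) -> bytes:
    """Toy stand-in for a real cipher; do not use for actual encryption."""
    return bytes(x ^ y for x, y in zip(a, b))


# Created once: the random volume key that would encrypt every file.
volume_key = secrets.token_bytes(32)

# Only this small wrapped blob depends on the password.
salt = secrets.token_bytes(20)   # 160-bit salt
wrapped = xor(volume_key, derive_kek("old password", salt))

# Changing the password: unwrap with the old one, re-wrap with the new one.
unwrapped = xor(wrapped, derive_kek("old password", salt))
new_salt = secrets.token_bytes(20)
rewrapped = xor(unwrapped, derive_kek("new password", new_salt))

# The volume key, and therefore every encrypted file, is untouched.
recovered = xor(rewrapped, derive_kek("new password", new_salt))
```

Only the small wrapped blob changes when the password changes, which is why no file needs to be encrypted again.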

About Unison

Unison is an excellent tool to synchronize two locations. It is simpler and more powerful than rsync for that specific purpose. I initially tried to synchronize the local files on my laptop with the Webdav mount point, but it was a disaster for the reasons I explained before.

- Unison can also work over SSH, but this requires unison to be installed on the server side as well. This way, I assume Unison detects changes on the server itself and sends only a final digest over SSH; it is impressively fast.

- I use cron or bash scripts with sleep loops for the synchronization scheduling.

- I configure unison to ignore paths in order to synchronize only parts of some directories located on the cloud to different nodes. For instance, let's say that I work at home on project 'p1' and at work on project 'p2'. I want to get :

  - On the cloud, all the projects : /mydata/myprojects/p1, /mydata/myprojects/p2

  - On my personal laptop : /home/me/p1 (only 'p1' files, no 'p2' files)

  - On my office laptop : /home/me/p2 (only 'p2' files, no 'p1' files)
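
The p1/p2 layout above could be driven by a small wrapper like the one below. It is only a sketch under assumptions: the host name `mycloud.example.org`, the user `me` and the helper `unison_command` are placeholders, not the actual setup. `-batch`, `-ignore` and `-prefer` are standard Unison options, but check them against your installed version.

```python
import shlex


def unison_command(local_root, remote_root, ignored, prefer=None):
    """Build a Unison command line that skips the given top-level paths."""
    cmd = ["unison", local_root, remote_root, "-batch"]
    for path in ignored:
        # Same as an "ignore = Path <name>" line in a Unison profile.
        cmd += ["-ignore", f"Path {path}"]
    if prefer is not None:
        # Resolve conflicts in favour of this root.
        cmd += ["-prefer", prefer]
    return cmd


# Personal laptop: keep p1 in sync with the cloud, never fetch p2.
home_cmd = unison_command(
    "/home/me",
    "ssh://me@mycloud.example.org//mydata/myprojects",
    ignored=["p2"],
    prefer="/home/me",
)
print(shlex.join(home_cmd))
```

The resulting list can be passed to `subprocess.run(home_cmd, check=True)` from a cron job or a sleep loop. The office laptop would use the same call with `ignored=["p1"]`.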

The technical stack in use

- Apache with SSL and Webdav modules

  - The same Apache virtual host serves plain HTTPS and Webdav : the former is obviously read-only, the latter can be written to or mounted.

  - I use a 4096-bit RSA certificate to make the communication safer.

  - The HTTPS virtual host is protected using a Digest Authentication password.

  - I use port 80 (for an HTTP tunnel) and port 443 (for Webdav and plain HTTPS) because HTTP proxies usually allow only these two. Using an HTTP tunnel lets me synchronize my directories even from behind an HTTP proxy when required.

- Unison for file synchronization.

- I use several well-known security tools, including an iptables firewall blocking every port except 80 and 443. Fail2ban is configured to ban attackers who fail to log in to the SSH or Apache services.

- http-tunnel is a very simple HTTP tunneling tool that works very well. It is available as a standard Debian package as well. I had a problem using it with unison behind an HTTP proxy, though, due to packet length. The solution for me was to set the -c option to a high value :

      htc -c 100M -F 1058 mydomain.com:80

- The cloud and laptop local data is stored encrypted using EncFS.

- The server files are backed up using the excellent tool 'backup-manager'. EncFS provides backup security for free, as I explained in the EncFS section. Naturally, the backup files have to be copied regularly to an external disk that is physically protected and located far away from the server, in case of disaster or theft.

Final thoughts

I finally met all my requirements :

- Very cheap (disk price : 0.08€/GB at the time of writing + 5.50€ / year of electricity for an average consumption of 4W + 60€ for the CubieBoard =~ 22€/year for 1TB of storage over a 5-year amortization period)

- Pretty safe solution. By security I mean mainly confidentiality, authentication and backup. All the data is stored at home, away from large Internet companies.

- Large storage space (1 TB).

- Very fast : synchronization usually lasts less than 2 min and has no significant effect on either the client or the server CPU. It performs several orders of magnitude better than every solution I tried before.
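
As a sanity check, the yearly cost can be recomputed from the figures quoted above. The sketch assumes 1 TB = 1000 GB and spreads the hardware evenly over the 5 years. The 0.15 €/kWh electricity price is an assumption of this example, not a figure from the article, used only to see whether 5.50€/year is plausible for 4W.

```python
DISK_EUR_PER_GB = 0.08
DISK_GB = 1000                   # assumption: 1 TB counted as 1000 GB
BOARD_EUR = 60.0
ELECTRICITY_EUR_PER_YEAR = 5.50
YEARS = 5

disk_per_year = DISK_EUR_PER_GB * DISK_GB / YEARS    # disk spread over 5 years
board_per_year = BOARD_EUR / YEARS                   # board spread over 5 years

without_board = disk_per_year + ELECTRICITY_EUR_PER_YEAR
with_board = without_board + board_per_year

# Cross-check of the electricity figure: 4 W running all year.
kwh_per_year = 4 * 24 * 365 / 1000
electricity_check = kwh_per_year * 0.15              # assumed 0.15 EUR/kWh

print(f"{without_board:.2f} {with_board:.2f} {electricity_check:.2f}")
```

Under these assumptions, disk plus electricity comes to about 21.5€/year, close to the ≈22€ quoted above; counting the CubieBoard as well gives about 33.5€/year.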

Bertrand Florat

