Hi! This is my personal page and blog. Here you will find articles, projects I'm involved in, and a few thoughts (mainly about IT).

I design, code and integrate large IT projects. I like to work in agile environments to bring as much value as possible to my customers, while dealing with budgets and timelines. My two main current roles are Solutions Architect on one side of the coin and DevOps engineer on the other. I also teach various IT subjects, including architecture, at the University of Nantes (France).

Latest technical articles:

On Thursday, June 26, 2025, I had the pleasure of being invited to an Arkup meetup (Arkup is a firm specializing in information systems architecture) to give a one-hour talk titled "Architecture Documentation as Code: Make Your Documentation Useful, Living, and Collaborative".

The slides are available here.

This article has also been published at DZone.

In this previous article, I highlighted some of the worst mistakes a Product Owner (PO) can make.

Now, it’s time for introspection and an analysis of the most common errors I’ve observed in architectural practices throughout my career.

Disclaimer: I’ve personally made a fair share of these mistakes throughout my career ;-).

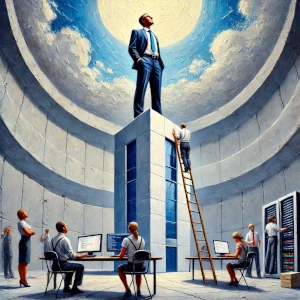

1. Being a “Seagull Architect”

This type of behavior has seriously damaged the reputation of our profession. Seagull Architects are highly concept-oriented but...

I want to tell you about tomorrow's bugs; without monitoring, they can break your path. I want to talk to you about bugs, about you. Inside the logs I see errors that go untracked, that sometimes scare me. Alerts that can drive us mad. Our systems are fragile; we poor developers, with no SRE to guide us, lose all our bearings. The human probe: the load belongs to you. You get alerts from none of your servers. The human probe: the load belongs to you. If you let the system slip into uncertainty, it's the...

IT integration involves configuring complex systems within large infrastructures to ensure all components work harmoniously. This challenging task requires a blend of coding skills and unique expertise. The following blueprints are derived from my pragmatic experience gained from the trenches of various large projects and apply to system integrators, DevOps engineers (with a focus on automation), and Site Reliability Engineers (SRE) who prioritize availability.

1. Less is Better

Distributed systems, such as microservice architectures, involve numerous configuration parameters, modules, and infrastructure components. Regular cleanups and refactoring are essential to avoid errors, cognitive fatigue, security risks,...

This article has also been published at DZone.

Updated: 03/11/2025

Murphy's Law ("Anything that can go wrong will go wrong, and at the worst possible time.") is a well-known adage, especially in engineering circles. However, its implications are often misunderstood, especially by the general public. It's not just about the universe conspiring against our systems; it's about recognizing and preparing for potential failures.

Many view Murphy's Law as a blend of magic and reality. As Site Reliability Engineers (SREs), we often ponder its true nature. Is it merely a psychological bias, where we emphasize...

As a Product Owner (PO), your role is crucial in steering an agile project towards success. However, it's equally important to be aware of the pitfalls that can lead to failure. In this blog post, we'll explore the actions that should be avoided to ensure your agile project stays on track and delivers valuable outcomes. It's worth noting that the GIGO (Garbage In - Garbage Out) effect is a significant factor: no good product can come from bad design.

This article has also been published at DZone.

On Agile and Business Design Skills

Lack...

This article has also been published at DZone.

In this article, I'll refer to a 'job' as a batch processing program, as defined in JSR 352. A job can be written in any language but is scheduled periodically to automatically process bulk data, in contrast to interactive processing (CLI or GUI) for end-users. Error handling in jobs differs significantly from interactive processing. For instance, in the latter case, backend calls might not be retried as a human can respond to errors, while jobs need robust error recovery due to their automated nature. Moreover,...
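As a rough illustration of the retry point above, here is a minimal Python sketch of a job step that retries a backend call with exponential backoff instead of failing at the first error. It is not taken from the article or from JSR 352: `TransientError`, `call_with_retry` and their parameters are hypothetical names chosen for this example.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for errors worth retrying (e.g. a backend timeout)."""


def call_with_retry(
    action: Callable[[], T],
    max_attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run `action`, retrying on TransientError with exponential backoff.

    No human is watching a batch job, so the job itself retries and only
    gives up (re-raising the last error) after `max_attempts` tries.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return action()
        except TransientError:
            if attempt == max_attempts:
                raise  # Out of attempts: let the scheduler see the failure.
            # Wait base_delay, then 2x, 4x, ... before the next attempt.
            sleep(base_delay * 2 ** (attempt - 1))
    raise AssertionError("unreachable")
```

An interactive front end would more likely surface the first error to the user; the retry loop only makes sense here because the caller is an unattended program.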

Datasets staticity level

[Article also published on DZone.]

A common challenge when designing applications is determining the most suitable implementation based on the frequency of data changes. Should a status be stored in a table to easily expand the workflow? Should a list of countries be embedded in the code or stored in a table? Should we be able to adjust the thread pool size based on the targeted platform?

In a current large project, we categorize datasets based on their staticity level, ranging from very static to more volatile:

Level 1: Very static datasets

These types of...

Architecture as Code with C4 and PlantUML

(This article has also been published at DZone)

Introduction

I'm lucky enough to currently work on a large microservices-based project as a solution architect. I'm responsible for designing different architecture views, each targeting a very different audience and therefore different concerns:

- The application view, dealing with modules and the data streams between them (targeting product stakeholders and developers);

- The software view (design patterns, database design rules, choice of programming languages, libraries...) that developers should rely upon;

- The infrastructure view (middleware, databases, network connections, storage, operations...) providing useful information for integrators and...

Bertrand Florat

Tech articles

Other articles

Projects

Courses

Contact
